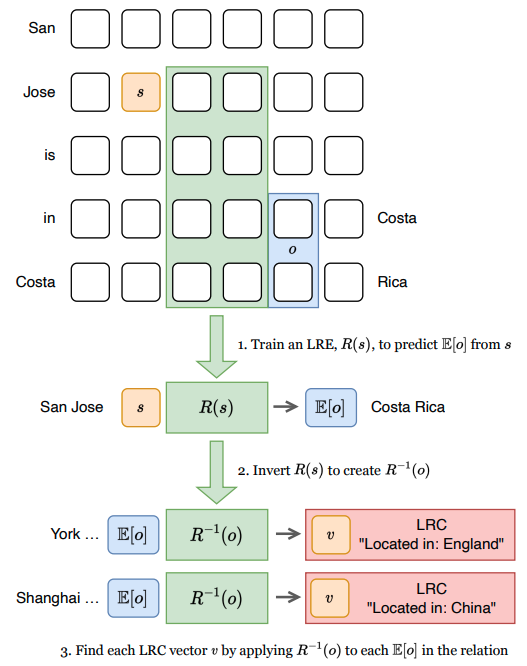

Excited to share that our paper 'Identifying Linear Relational Concepts in LLMs' with Oana-Maria Camburu and Anthony Hunter has been accepted to #NAACL2024 ! Details 👇

Paper: arxiv.org/abs/2311.08968

Code: github.com/chanind/linear…

See you in Mexico! 🇲🇽

#XAI #MechanisticInterpretability

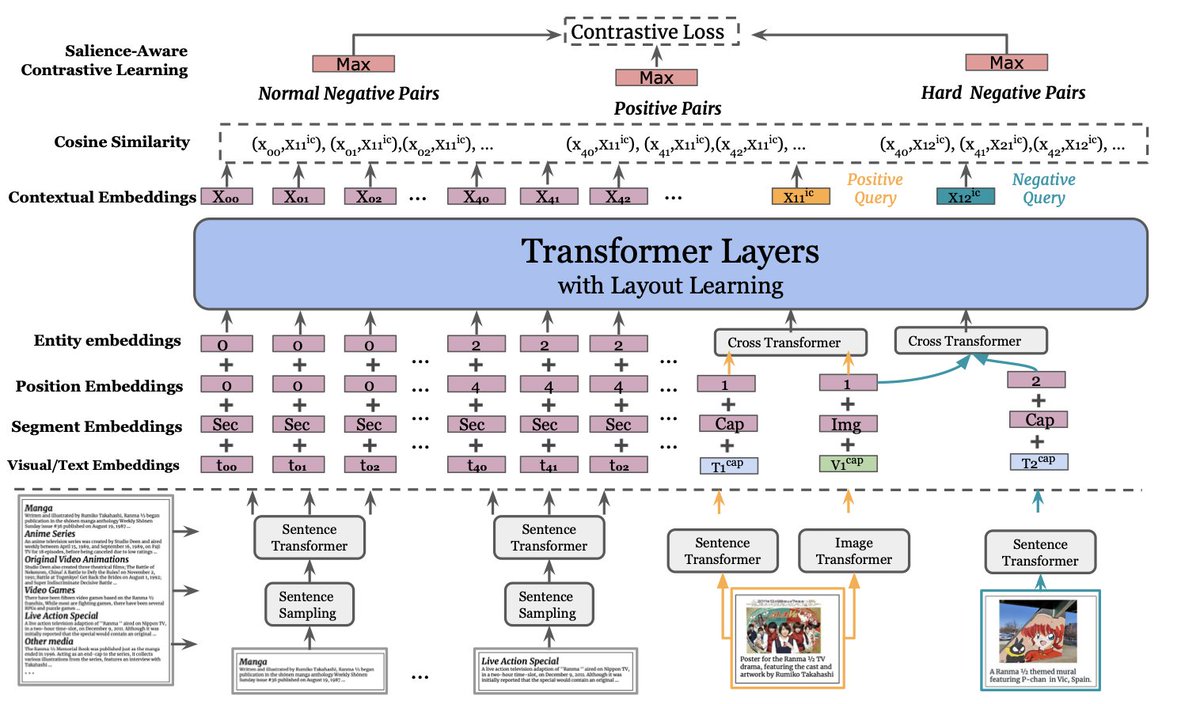

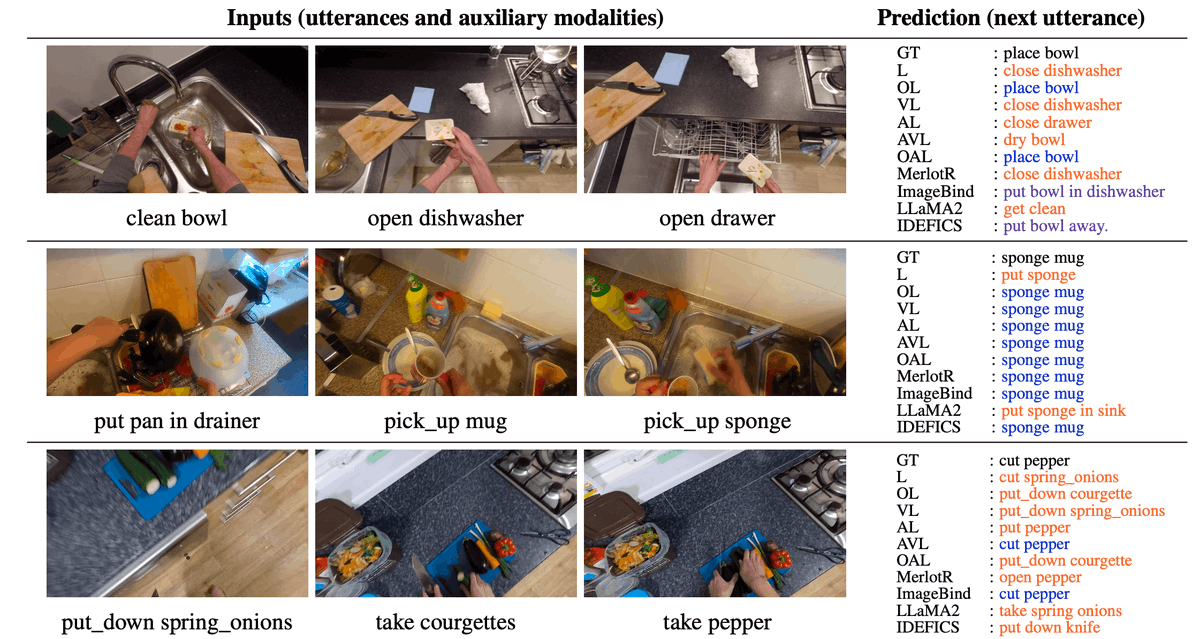

1. 🧵🎉 Excited to share that our paper 'Sequential Compositional Generalization in Multimodal Models' is accepted as a long paper at #NAACL2024 ! 🌟 We'll be presenting our findings in Mexico City this June (NAACL HLT 2024). Dive into the full details here 👇

- Paper:

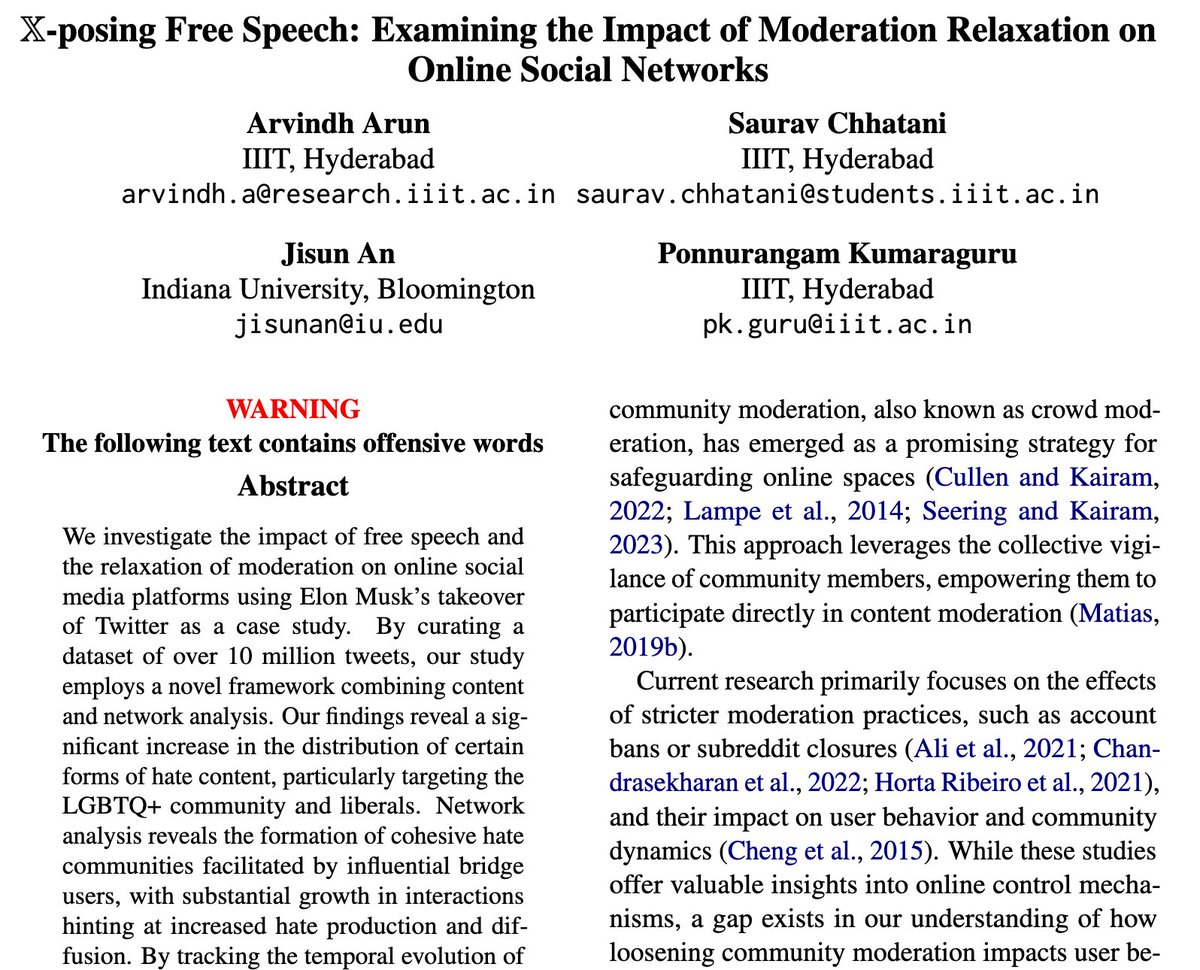

📢 Our work "X-posing Free Speech: Examining the Impact of Moderation Relaxation on Online Social Networks", studying Elon Musk's takeover of #Twitter #X, was accepted at the Workshop on Online Abuse and Harms at NAACL HLT 2024 #NAACL2024 w/ Arvindh Arun, Saurav Chhatani, Jisun An

📜arxiv.org/pdf/2404.11465…

Findings 🧵👇

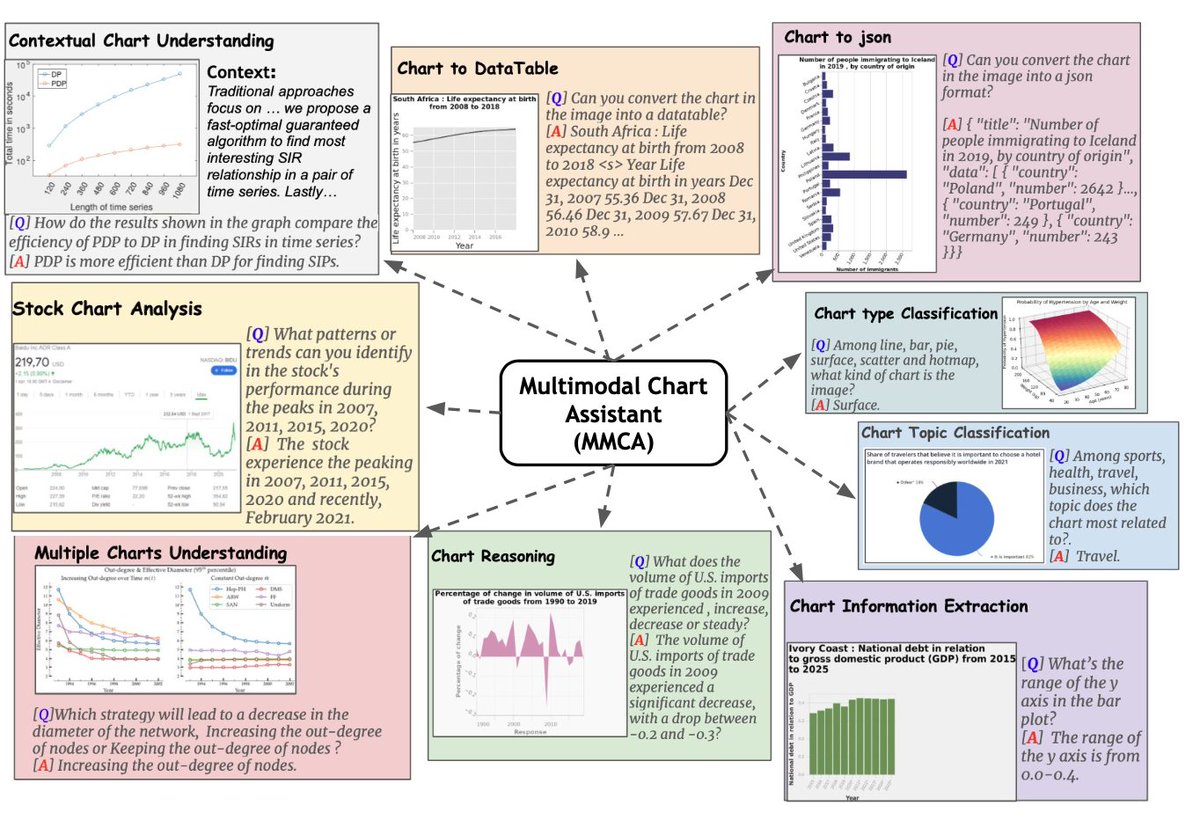

Very excited and equally proud of this work! Spent a lot of time on data collection & systematic eval of several model paradigms (sft/n-shot), with the most surprising result: complementary perf between humans & AI. Go Sanchaita Hazra!! We'll be in Mexico City, hit us up! #NAACL2024

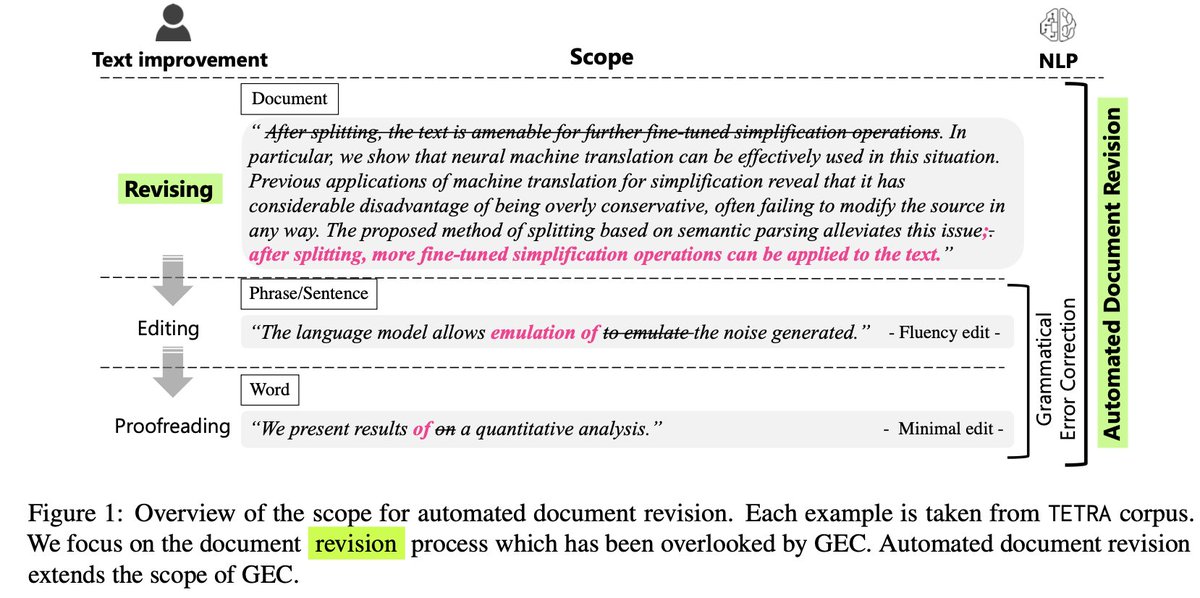

Check out our #NAACL2024 paper on multilingual text editing 👇

We present results on Grammatical Error Correction, Text Simplification, and Paraphrasing in seven languages (Arabic, Chinese, English, German, Japanese, Korean, and Spanish).

🌟 Exciting News Alert! 🌟 Thrilled to announce our lineup of confirmed keynote speakers for the Mexican NLP Summer School 2024! 🎉

#MexicanNLPSummerSchool #NAACL2024

AMPLN

Red TTL

Performance as a function of tuning effort is called *the tuning curve*. If you haven't yet, you can learn more from the #NAACL2024 paper by He He, Kyunghyun Cho, and me: 'Show Your Work with Confidence'!

arxiv: arxiv.org/abs/2311.09480

og tweet: x.com/NickLourie/sta….

4/4

🤔 Diãcritics: Translation's Friend ôr Foe?

🚀 We are happy to share our work “Interplay of Machine Translation, Diacritics and Diacritization”, which will appear at the #NAACL2024 main conference.

Diacritics are markers attached to characters (e.g. the circle on å, the hat on ê).

Our paper “Towards Automated Document Revision: Grammatical Error Correction, Fluency Edits, and Beyond” was accepted at BEA 2024 (co-located with #NAACL2024)! w/ Keisuke Sakaguchi, Masato Hagiwara, みずもともや, Jun Suzuki, Kentaro Inui / 乾健太郎

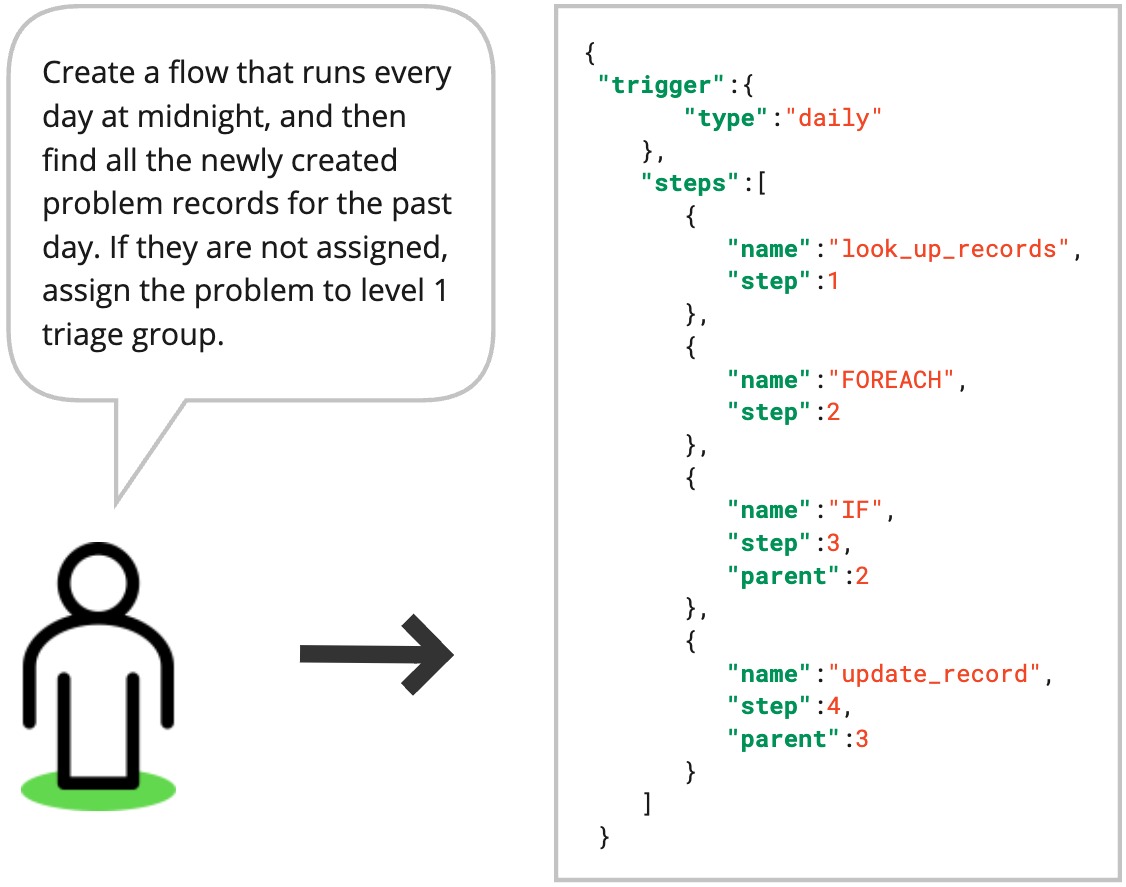

Excited to share that our latest paper was accepted at the #NAACL2024 Industry Track!

In this work, we tackled the challenge of hallucination in text-to-workflow generation.

arxiv.org/abs/2404.08189🧵

Paper from The Sanghani Center at Virginia Tech accepted to #NAACL2024, which will be held in Mexico City in June.

Authors are Mandar, Rutuja Taware, Pravesh Koirala, Nikhil Muralidhar, and Naren Ramakrishnan. Read about their research here: arxiv.org/pdf/2404.01536…

Haize Labs Cool stuff! I believe Maksym Andriushchenko 🇺🇦 also explored this llama3 vulnerability concurrently in the below thread and the same vulnerability for Claude models in his earlier paper about pre-filling x.com/maksym_andr/st…

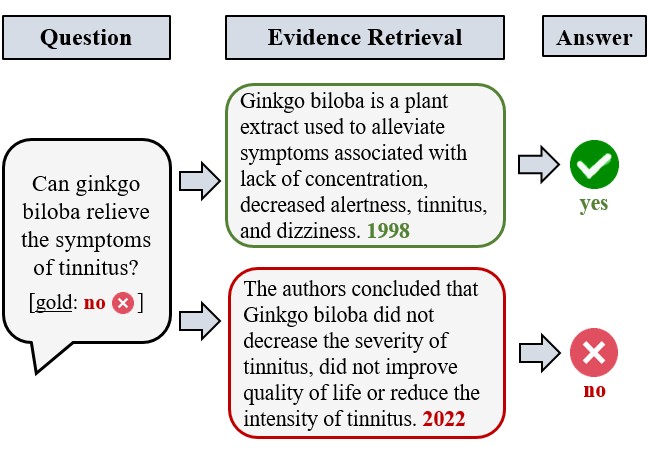

Happy to share that our paper on open-domain health question answering was accepted to Findings of #NAACL2024 ! 🇲🇽

We show that QA performance improves when retrieving fewer evidence documents and filtering them by publication year and reputation.🧮

📜: arxiv.org/abs/2404.08359

Ashwinee Panda Haize Labs Maksym Andriushchenko 🇺🇦 Ah, but I guess I didn't look closely enough, perhaps he didn't explore pre-filling in the latest attack on llama3 -- I think it mostly just reminded me of the pre-filling attack on Claude from Maksym's earlier paper arxiv.org/abs/2404.02151