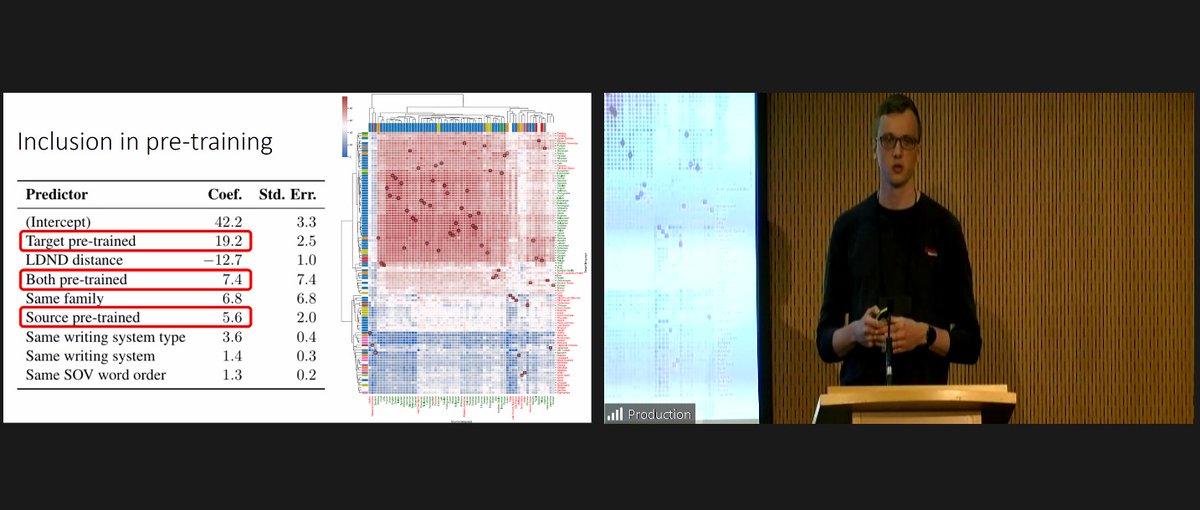

Our new @GroNLP paper w/Martijn Wieling and Malvina Nissim, about cross-lingual transfer for >100 languages, has been accepted at ACL 2022! We show that English is NOT the best training language, and we discuss which factors do influence effective transfer. (Paper+models+demo+code) 1/n

Conference season is here! 🎓 Wietse de Vries just presented his paper (with Malvina Nissim & Martijn Wieling, GroNLP) on cross-lingual transfer for >100 languages at #ACL2022 (@aclmeeting). 🎉 If you missed it, you can still catch him at his poster tomorrow!

The @GroNLP team is traveling to Singapore to present 8 #EMNLP2023 Main/Findings/Workshops papers! 🐮

Meet Jirui Qi, Wietse de Vries, Gabriele Sarti, Arianna Bisazza, Khalid Al-Khatib, and Daniel Scalena in person, and Lisa Bylinina and Tommaso Caselli at the virtual EMNLP 2023!

Our papers ⬇️ #EMNLP23 #nlproc

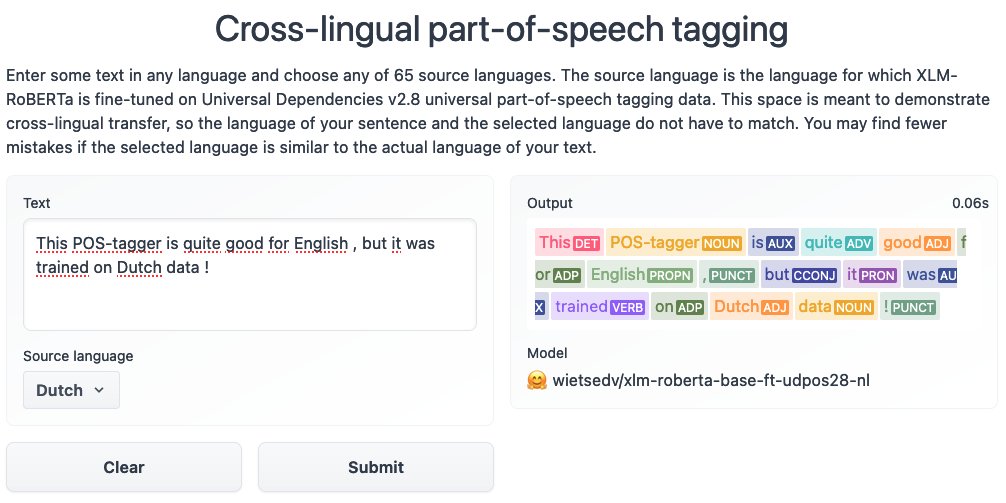

Models and a demo can be found on Hugging Face Spaces (second link). Evaluation results for each model can be found on the linked model pages.

📝 Paper: wietsedv.nl/files/devries_…

🤗 Models + Demo: hf.co/spaces/wietsed…

💻 Code: github.com/wietsedv/xpos

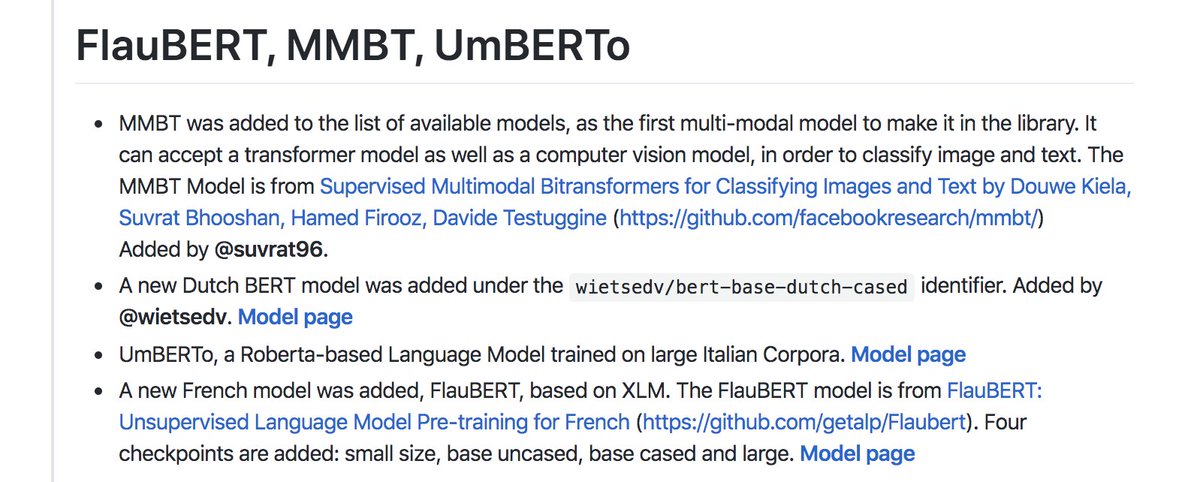

New models from:

- Wietse de Vries (Dutch BERT)

- Douwe Kiela at Facebook AI (MMBT, multi-modal model)

- Hang Le, Laurent Besacier et al. (FlauBERT, French-trained XLM-like)

- Loreto Parisi, Simone Francia et al. at Musixmatch (UmBERTo, Italian CamemBERT-like)

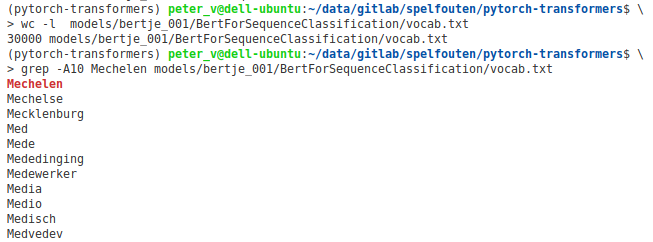

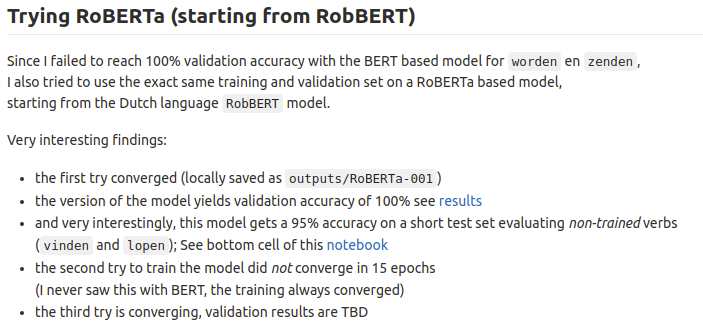

Interesting! I got stuck short of 100% validation accuracy on BERTje, so I tried RobBERT, a _RoBERTa_-based model. It reached 100% validation accuracy and 95% accuracy for 'dt'-mistakes on _untrained_ verbs.

gitlab.com/spelfouten/dut…

Pieter Delobelle Wietse de Vries #NLP #AI #BERT

We also briefly talked about our project Van Old noar Jong with Scholierenacademie - Rijksuniversiteit Groningen. In this project we are developing a teaching package for primary education around our educational game #Gronings (made by Wietse de Vries). Thanks to #Google for the funding and to Alfa-college for the pilot game!

Wietse de Vries GroNLP Malvina Nissim I've been waiting for a Dutch GPT-2 model for a while now. This is a great, and computationally efficient, step in the right direction! Thanks for this new addition! (PS: great typo in the paper! :D)

Noorderzon Marleen Weggemans Zwarte Cross University of Groningen Faculty of Arts - University of Groningen Speech Lab Groningen GroNLP Teja Rebernik Raoul Buurke Hedwig Sekeres Thomas Tienkamp Martijn Bartelds Wietse de Vries Masha Medvedeva Anna Pot Katharina Polsterer Indeed, daily (through this coming Tuesday) with University of Groningen #SPRAAKLAB from 13:00 to 20:00! Speech Lab Groningen

Wietse de Vries Douwe Kiela Hang Le Laurent Besacier Loreto Parisi Simone Francia Musixmatch Hugging Face Victor Sanh Lysandre clem 🤗 Pierric Cistac Anthony MOI Much improved adoption of modern Python best practices throughout the codebase. Thanks Lysandre, Thomas Wolf and Aymeric Augustin 💪

What's so special about BERT's layers? Joint work with Wietse de Vries and Andreas on a comparison of Dutch BERTje and multilingual BERT wrt the NLP pipeline. We spot a few interesting differences between the two models. And we dig deeper into layer specialisation. 1/2

My CGTC PhD student Martijn Bartelds (co-supervised with Mark Liberman) and I talked about his #DeepLearning algorithm (joint work with PhD student Wietse de Vries, among others) to quantify acoustic pronunciation differences. It has much better performance than: frontiersin.org/articles/10.33…