Apache Parquet

@ApacheParquet

Apache Parquet is an open source, column-oriented data file format designed for efficient data storage and retrieval.

It provides high performance compression

ID:1342646282

https://parquet.apache.org 10-04-2013 19:07:49

383 Tweets

8.7K Followers

26 Following

PSA: If you use the page-level statistics in Apache Parquet please chime in on JIRA: issues.apache.org/jira/browse/PA…

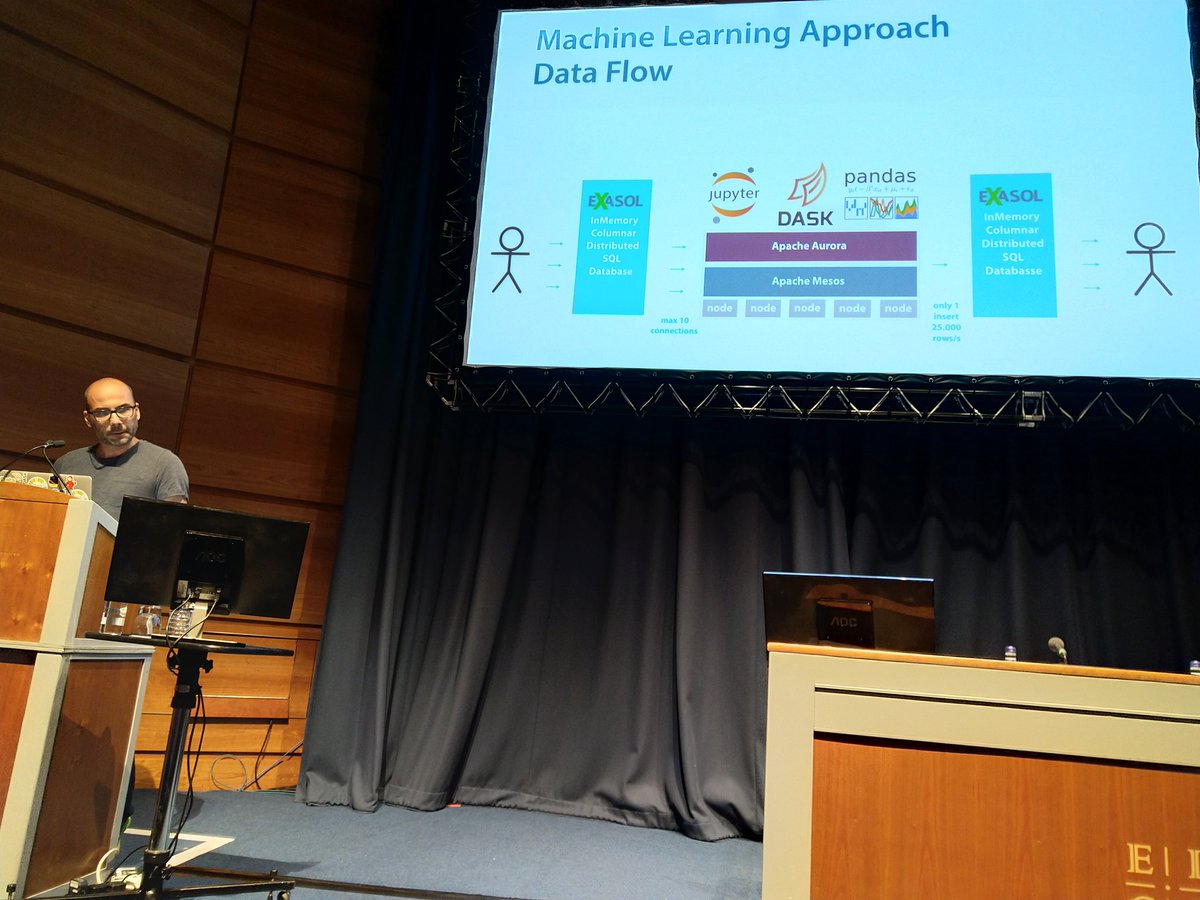

Last speaker in the #europython scientific room before lunch is Peter Hoffmann, talking about #Pandas and #Dask to work with large datasets in Apache Parquet.

Gabor Hermann bol Apache Kafka Apache Parquet Apache Flink bol_Techlab Have a look at the Apache Flink bucketing sink rework for the upcoming release and the Parquet writer ;)

Stack Overflow Apache Spark Can someone answer this -> why is Apache Parquet format faster than other columnar storage like hbase, kudu etc? stackoverflow.com/q/48761227/318…

My talk from the DMBI 2018 Conference at Ben-Gurion University of the Negev about our journey at @Verint_Cyber to #BigData #Cyber Analytics on Apache Hadoop Apache Spark Apache Kafka Apache Parquet is available at youtube.com/watch?v=nh-JyY… . Thanks everyone for attending!

One month from now I'll be speaking on Verint's big data journey with Apache Spark Apache Kafka and Apache Parquet at the #StrataData Conference in London. If you're there, drop by! conferences.oreilly.com/strata/strata-…

2nd #PyData LDN #keynote - holden karau & Boo (Programmer) walk us through a zoo of #tools for #BigData & #distributed #data in #Python : #Apache #Spark , #PySpark , #Arrow , #Beam , #Parquet & #Dask

Apache Spark ApacheArrow Apache Beam Apache Parquet Dask

#PyData PyData NumFOCUS

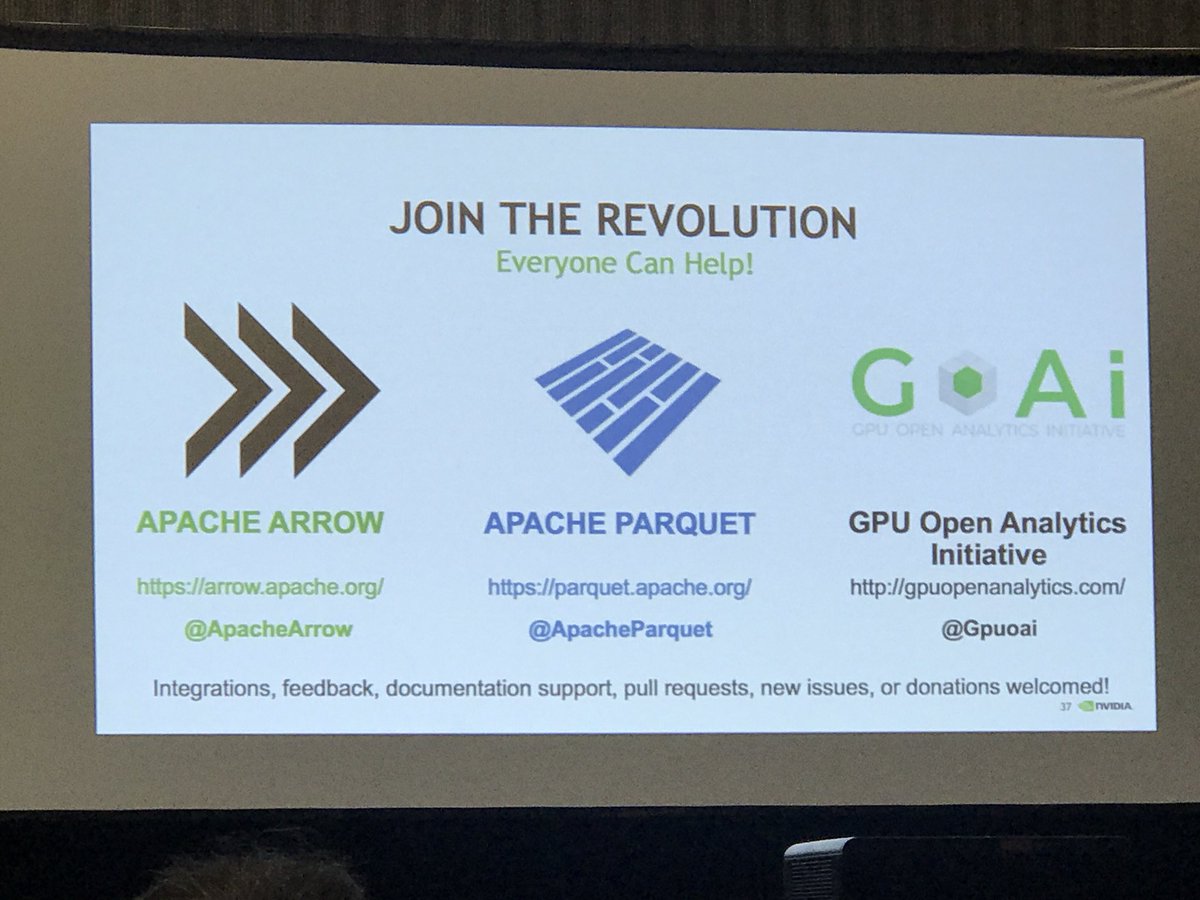

Join the #GPU accelerated #analytics and #ML revolution. ApacheArrow Apache Parquet and GPU Open Analytics Initiative #GTC18

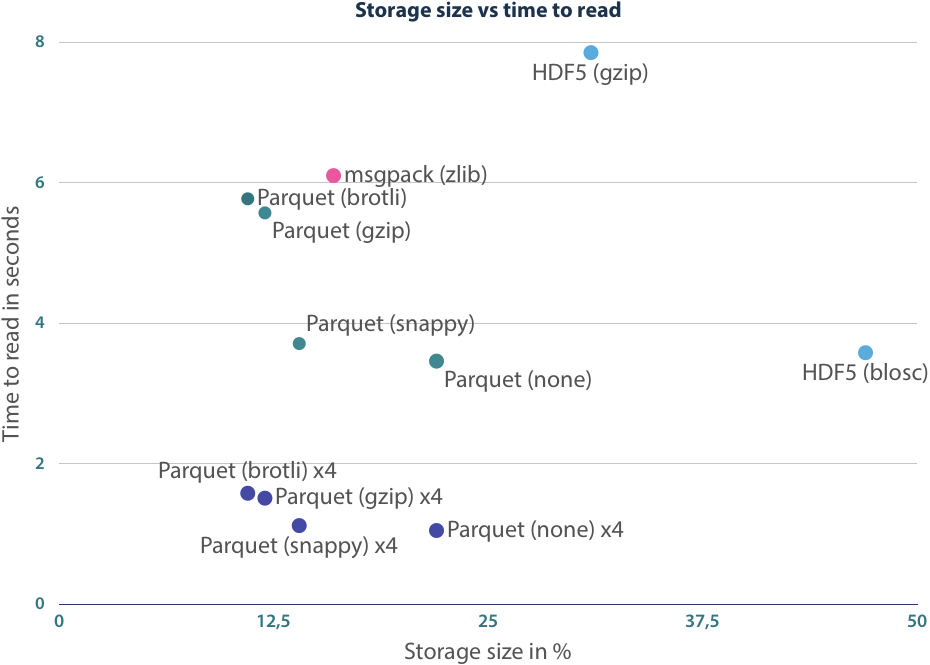

Great benchmark between Apache Parquet on #hdfs and Apache Kudu blog.clairvoyantsoft.com/guide-to-using…

In short, Kudu is faster than Parquet for random-access queries like CRUD operations, but slower for analytics queries.

You do not need Spark to create Apache Parquet files; you can use plain Java, and it can even fit in AWS Lambda for a serverless solution:

engineering.opsgenie.com/analyzing-aws-…

I'll be speaking at #StrataData Conference this May in London, and share our journey in one of our many #BigData adventures with Apache Spark. You're all invited! conferences.oreilly.com/strata/strata-… Apache Hadoop Apache Kafka Apache Parquet O'Reilly Strata Data & AI Conference

Apache Parquet ApacheArrow Also the file size went down from 10Gigs to 3Gigs without any compression.

Working with a 10Gig CSV file. Pandas read_csv took 16mins to load the CSV into memory. Converted to Apache Parquet with ApacheArrow. It took 30 secs to read into a pyarrow table and 16 sec to convert to a pandas DataFrame.

16mins => 46sec!

tech.blue-yonder.com/efficient-data…

Come hear me talk about ApacheArrow and Apache Parquet at #NABDConf in Palo Alto next Tuesday! x.com/jqcoffey/statu…

At IEEE/ACM UCC/BDCAT today in #Austin presenting our work with Plantbreeding WUR on managing #agri #genomic #bigdata with Apache Spark and Apache Parquet

I'm proud to have shared the stage with Doug Cutting for this podcast on serialization formats. A great pleasure.

x.com/dataengpodcast…